Here’s a picture of Laika, the tester dog, with Dr. James Whittaker’s new book, Exploratory Software Testing: Tips, Tricks, Tours, and Techniques to Guide Test Design. It showed up on my doorstep last week and is my first free testing book ever (thanks, Dr. Whittaker!).

While reading Stephen Few’s new book, Now You See It, I came across a completely different perspective on looking at graphics in an “exploratory” manner. I can literally hold a book preaching the value of “exploratory testing” in one hand and a book preaching the value of “exploratory analysis” in the other. They are the same concept. If you have ever wondered what interdisciplinary means, this is a great example of an interdisciplinary concept.

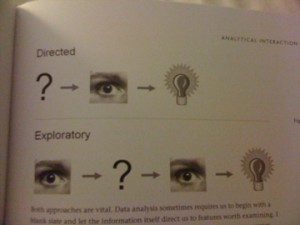

Stephen Few does a great job of explaining exploratory analysis with pictures:

Half of the people reading this now understand the underpants gnome tie-in. For those who don’t get it, here’s a link to the original South Park clip (NSFW).

Jokes aside, I’m going to start with the picture, discuss what it says to me about testing, and see if it meshes with JW’s definition of exploratory testing. I will then look at how it applies to visualization. At the end, the two will either come together or not. At this point, I’m not sure if they will. I’ll just have to keep exploring until I have an answer or a comment telling me why my answer is crap (which is fine with me if you have a good point).

I’ll start with the picture and testing. I’m assuming the “?” means “write tests,” the eyeball means “analyze,” and the light bulb is the decision of pass or fail. The illustration of directed analysis looks like the process HP Quality Center assumes: QC expects you to have written most of your tests and test steps, based on written requirements, before you start testing. Then you test. After you’ve tested, you have an outcome.

The second line, for “exploratory” analysis, looks like a much more cognitive and iterative process. It says that the tester has the opportunity to interact with the system under test (SUT) before formulating any tests (eyeball). After playing with the SUT, the tester pokes it with a few tests (“?”). At this point the tester may decide that some things work and keep poking, or decide that some things have failed and write defects (light bulb). Chapter 2 of Exploratory Software Testing describes how JW defines exploratory testing: “Testers may interact with the application in whatever way they want and use the information the application provides to react, change course and generally explore the application’s functionality without restraint (16).” So far this looks very similar.

Now that I’ve looked at how the exploratory analysis paradigm applies to testing, here’s how it applies to visualization. As an example, I’m looking at a New York Times graphic, How Different Groups Spend Their Day. When I open this graphic, I can see that it’s interactive, so I immediately slide my mouse across the screen. I notice the tool tips. Reading these gets me started reading the labels and eventually the description at the top. Then I start clicking. The boxes on the top right act as a filter. There is also a filter that engages when a particular layer is clicked.

Few’s point in describing directed analysis vs. exploratory analysis is that in the wild, when we look at visualizations, we use exploratory analysis. It’s not as if I knew what I was going to see before I opened the visualization. Few describes the process known as “Shneiderman’s mantra” (for Ben Shneiderman of treemap fame) in more detail, saying that we make an overall assessment (eyeball), take a few specific actions (“?”), then reassess (eyeball). Although Few doesn’t say that a decision is made at some point in this process, I’m assuming one is because of the light bulb in the picture (84).

Recently, Stephen Few asked for industry examples of people using visualization to do their work. Some of the replies came from the airline industry, a mail order warehouse and a medical center. Software engineers should be included in this mix, and judging from the treemap of Vista code complexity on page 130 of JW’s book, they already are. Given that testing and visualization use the same form of exploratory analysis, I can see why.

Exploratory testing and exploratory visualization diverge, however, when you look at the scale of data at which each is effective. Visualization requires a large dataset. This could be multiple runs of a set of tests or, as in JW’s example, an analysis of large amounts of source code. Exploratory testing as JW describes it can occur at a high level, as in the case of a visualization, or at the level of an individual test.

One thing my exercise has shown me for sure is that I have to read more of Exploratory Testing.